Creating Trustworthy AI

Author: Steve Venema

Created at: Feb 2023

Updated at: Feb 2023

AI technologies seem to be used almost everywhere these days and, as technology providers, we need to be aware of the potential for bias and to work to mitigate harms it can cause. In March 2022, NIST published SP 1270, Towards a Standard for Identifying and Managing Bias in Artificial Intelligence to help collate and communicate current research and understanding on this complex and subtle topic. I’ll review this and related documents in this article.

Examples of Harmful Effects

In SP 1270, NIST provides many examples where such bias has caused harm and negatively impacted lives in various situations, particularly in the areas of employment, health care and criminal justice. They also include nearly 30 related references to external publications in the bibliography.

One example (reference [6]) is titled “Objective or Biased”; it describes what a team of reporters from Bayerischer Rundfunk (German Public Broadcasting) found when they studied an AI product marketed to employers as a screening tool. The product was marketed as an aid to “…identify the personality traits of job candidates based on short videos. With the help of Artificial Intelligence (AI) they are supposed to make the selection process of candidates more objective and faster.” The AI produced relative measures of Openness, Conscientiousness, Extraversion, Agreeableness and Neuroticism.

The study used actors to record short interview videos using the same script, speech intonation, and expressions while varying the actor’s appearance (eyeglasses, headscarf), their background (white background or a mirror behind the actor), the scene lighting, etc. across recordings. The reporters discovered that the software made significant and seemingly inexplicable changes to its personality profile outputs in response to these input variations. Hardly objective, it seems.

The public often perceives examples such as these as evidence that AI technologies (machine learning, facial recognition, etc.) cannot be trusted. The examples show that these concerns are not unfounded.

Establishing Public Trust

SP 1270 identifies public trust as an important challenge to extracting value from AI-based systems. It defines the following key characteristics for gaining and maintaining public trust:

- Accuracy
- Explainability & Interpretability
- Privacy
- Reliability
- Robustness
- Safety
- Security Resilience
- Mitigation or control of harmful biases

Most of these terms are self-explanatory, but it’s worth taking a moment to think about Explainability & Interpretability in particular. NISTIR 8312, Four Principles of Explainable Artificial Intelligence (Sept., 2021) goes into great detail on this topic, but here are my simplified definitions:

- Explainability: The AI system provides evidence that shows the reason behind a given conclusion or finding.
- Interpretability: The explanations for a given finding are understandable by, and meaningful to, the intended audience.

Both of these characteristics may be difficult to achieve in the case of lay audiences, which is one of the challenges with applying AI technologies to public use cases that require public transparency and/or accountability.
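To make the two definitions above a little more concrete, here is a minimal Python sketch. It assumes a toy, hand-weighted linear score; the feature names, weights, and threshold are hypothetical and are not taken from NISTIR 8312 or from any real product. The per-feature contributions play the role of the "evidence" (explainability), and the short plain-language summary is one possible way of presenting that evidence to a lay audience (interpretability).

```python
from dataclasses import dataclass

# Hypothetical feature weights for a toy linear risk score (illustrative only).
WEIGHTS = {"failed_logins": 0.5, "new_device": 2.0, "unusual_hour": 1.0}
THRESHOLD = 2.5  # assumed decision threshold


@dataclass
class Explanation:
    decision: str
    score: float
    contributions: dict  # feature name -> contribution to the score


def score_with_explanation(features: dict) -> Explanation:
    """Explainability: return the decision together with the evidence behind it."""
    contributions = {name: WEIGHTS[name] * features.get(name, 0) for name in WEIGHTS}
    score = sum(contributions.values())
    decision = "flag" if score >= THRESHOLD else "allow"
    return Explanation(decision, score, contributions)


def render_for_audience(explanation: Explanation) -> str:
    """Interpretability: phrase the evidence in terms the audience understands."""
    top = max(explanation.contributions, key=explanation.contributions.get)
    return f"Decision: {explanation.decision}. Main factor: {top.replace('_', ' ')}."


result = score_with_explanation({"failed_logins": 3, "new_device": 1, "unusual_hour": 0})
print(result.contributions)
print(render_for_audience(result))
```

Real ML models are rarely this transparent, which is exactly why producing both the evidence and an audience-appropriate rendering of it is hard in practice.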

How Does Bias Happen?

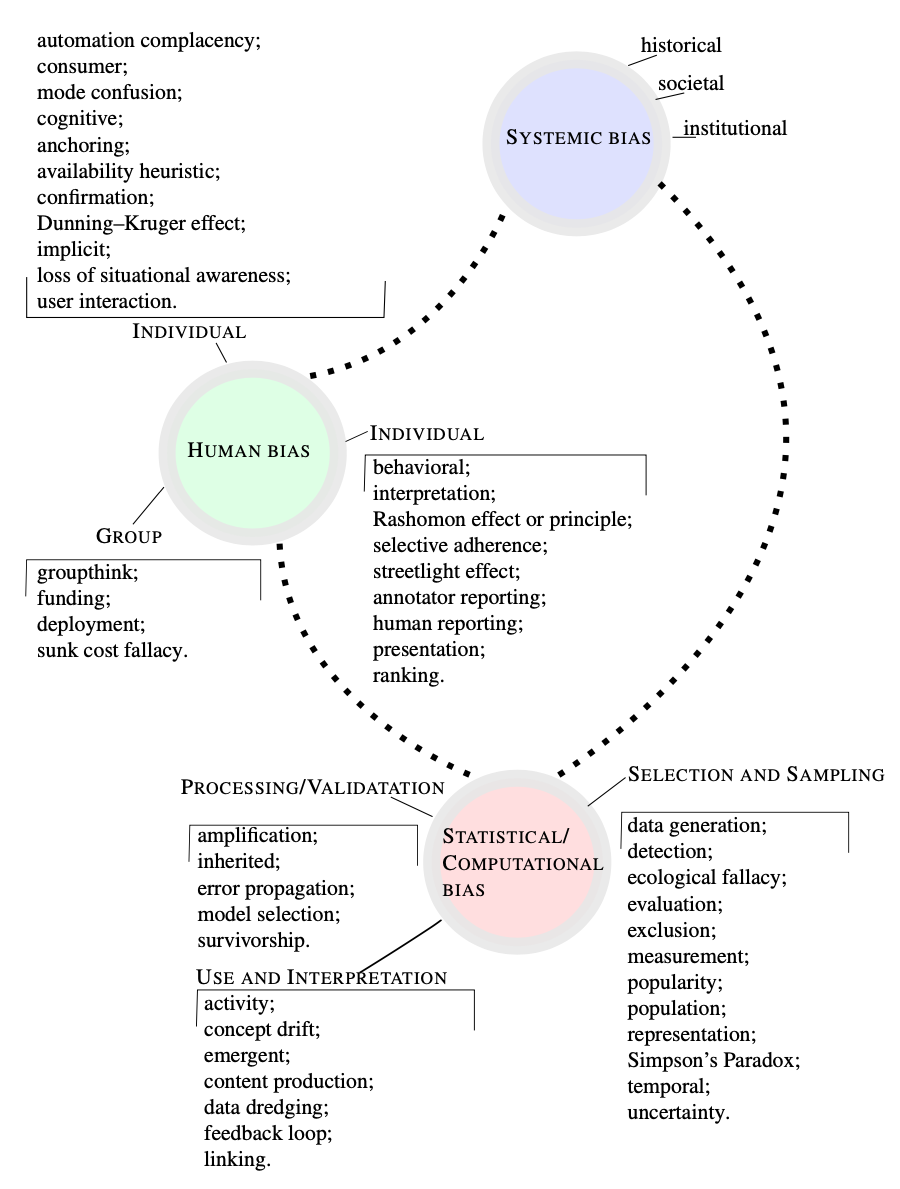

SP 1270 defines three broad categories of bias: Systemic Bias, Human Bias, and Statistical / Computational Bias. These are further divided into many specific types of bias, as shown in SP 1270 Figure 2 (reproduced below).

Much of the document is devoted to defining each of these types of bias and how they occur. Some examples:

- Activity Bias: “A type of selection bias that occurs when systems/platforms get their training data from their most active users, rather than those less active (or inactive).”
- Temporal Bias: “Bias that arises from differences in populations and behaviors over time.”
- Uncertainty Bias: “Arises when predictive algorithms favor groups that are better represented in the training data, since there will be less uncertainty associated with those predictions.”

Understanding these various forms of bias is important to avoiding or mitigating bias in an AI-driven system; reviewing SP 1270 is a great place to start.
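The Uncertainty Bias definition quoted above has a simple statistical core that can be sketched in a few lines of Python. The group names and counts below are made up for illustration, and the standard error formula is the usual normal approximation for an observed proportion; this is not code from SP 1270. Both groups have the same observed rate, but the estimate for the smaller group is far less certain, so an algorithm that prefers confident predictions would tend to favor the larger group.

```python
import math


def standard_error(successes: int, n: int) -> float:
    """Standard error of an observed proportion (normal approximation)."""
    p = successes / n
    return math.sqrt(p * (1 - p) / n)


# Hypothetical training-set counts: (positive outcomes, total examples).
groups = {"well_represented": (4500, 9000), "under_represented": (50, 100)}

errors = {name: standard_error(s, n) for name, (s, n) in groups.items()}
for name, se in errors.items():
    rate = groups[name][0] / groups[name][1]
    # 1.96 standard errors gives an approximate 95% interval half-width.
    print(f"{name}: rate={rate:.2f}, 95% interval about +/- {1.96 * se:.3f}")
```

With these assumed counts, the interval for the under-represented group is roughly nine times wider than for the well-represented group, even though the underlying rate is identical.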

Bias Is Not New

I attended a virtual NIST Workshop in August 2022 titled, “Mitigating AI Bias in Context”. A recording and slides from the workshop are available at this link. The workshop consisted of several academic presentations as well as NIST presenting some of their recent and upcoming work in this area.

One presenter, Dr. Itiel Dror of University College London, discussed sources of bias for both humans and AI. He referenced his 2020 paper, which includes a list of six fallacies regarding why people believe they themselves are not biased. I’ve included a summary from that paper below as good food for thought on whether and how you too might be susceptible to fallacious thinking on human bias:

Six Human Bias Fallacies

| Fallacy | Incorrect Belief |
|---|---|
| Ethical Issues | It only happens to corrupt and unscrupulous individuals, an issue of morals and personal integrity, a question of personal character. |
| Bad Apples | It is a question of competency and happens to experts who do not know how to do their job properly. |
| Expert Immunity | Experts are impartial and are not affected because bias does not impact competent experts doing their job with integrity. |
| Technological Protection | Using technology, instrumentation, automation, or artificial intelligence guarantees protection from human biases. |
| Blind Spot | Other experts are affected by bias, but not me. I am not biased; it is the other experts who are biased. |
| Illusion of Control | I am aware that bias impacts me, and therefore, I can control and counter its effect. I can overcome bias by mere willpower. |

Like humans, AI systems can also suffer from biases. These biases may stem from issues like:

- Poor assumptions behind the problem that the AI system is tasked with solving
- Bias in the data used to train the AI model
- Inappropriate application of the AI model
- Misinterpretation of (or overconfidence in) the AI system’s results

Dr. Dror goes on to say that a combination of Human and AI working together (what he calls Distributed Cognition) may yield less bias than either alone. This hypothesis aligns well with my belief that we should always reserve a way for a human to review and perhaps override an AI-based result or decision. In short, we should do the following:

Always leave room for human operators of AI-based systems to say, “Hmm, that doesn’t look right” and not just blindly trust the results (“The computer told me X, therefore X must be true”).
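One way to leave that room is to route low-confidence results to a person and to let a human decision always take precedence over the AI result. The following Python sketch shows the idea under stated assumptions: the `Decision` class, the `override` helper, and the 0.90 review threshold are all hypothetical names and values chosen for illustration, not part of any real product or standard.

```python
from dataclasses import dataclass
from typing import Optional

REVIEW_THRESHOLD = 0.90  # assumed cutoff: less confident results go to a human


@dataclass
class Decision:
    subject: str
    ai_outcome: str
    confidence: float
    human_outcome: Optional[str] = None
    reviewer: Optional[str] = None

    @property
    def needs_review(self) -> bool:
        # Only unreviewed, low-confidence results are queued automatically.
        return self.human_outcome is None and self.confidence < REVIEW_THRESHOLD

    @property
    def final_outcome(self) -> str:
        # A human decision, when present, always wins over the AI result.
        return self.human_outcome if self.human_outcome is not None else self.ai_outcome


def override(decision: Decision, outcome: str, reviewer: str) -> Decision:
    """Record a human decision; allowed for any result, confident or not."""
    decision.human_outcome = outcome
    decision.reviewer = reviewer
    return decision


decisions = [
    Decision("request-1", "approve", 0.98),
    Decision("request-2", "deny", 0.62),
]
review_queue = [d for d in decisions if d.needs_review]
override(review_queue[0], "approve", reviewer="analyst-1")
for d in decisions:
    print(d.subject, d.final_outcome, "(human)" if d.reviewer else "(AI)")
```

Note that `override` does not check the confidence value: even a high-confidence AI result can be overridden when an operator thinks it does not look right.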

Risks Associated with AI Bias

As discussed earlier, bias can cause harm to individuals and/or classes of individuals in the form of inequities and lack of fairness in the results generated by AI systems. One can view this as a form of risk which needs to be managed and mitigated where possible. In addition, there are potential secondary effects of these harms: litigation by people who feel they have been harmed, and a loss of trust in your company’s products and its brand.

NIST has developed an AI Risk Management Framework (RMF) to help companies evaluate these risks. The AI RMF 1.0 was published in January 2023, along with a draft RMF Playbook and Explainer Video. In NIST’s own words, “AI systems can amplify, perpetuate, or exacerbate inequitable outcomes. AI systems may exhibit emergent properties or lead to unintended consequences for individuals and communities.” These resources are full of useful information and ideas on how to manage your company’s risks associated with using AI technologies.

Call to Action

End Users of AI-enabled products should do the following:

1. Familiarize yourself with bias and fairness using resources like those discussed above.
2. Ask your AI-enabled product suppliers about the explainability and interpretability of their product’s outputs, as well as the ability for human operators to override AI outputs if circumstances warrant.
3. Analyze how your use of a given AI system could cause harm to your users and develop a plan to mitigate these harms.
4. Implement a plan to detect and remediate emergent issues due to bias and fairness through human intervention as necessary.
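As one possible starting point for the detection step in the last item above, the sketch below compares outcome rates across groups and flags any group that trails the best-served group by more than a chosen gap. The function names, the sample records, and the 0.2 gap are assumptions for illustration only; a real monitoring plan would need metrics and thresholds suited to its own context, and a flagged group is a prompt for human review rather than proof of bias.

```python
from collections import defaultdict


def approval_rates(records):
    """Compute the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def groups_to_review(rates, max_gap=0.2):
    """Return groups whose rate trails the highest rate by more than max_gap."""
    best = max(rates.values())
    return sorted(group for group, rate in rates.items() if best - rate > max_gap)


# Hypothetical decision log: group A approved 8 of 10, group B approved 5 of 10.
records = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

rates = approval_rates(records)
print(rates)
print("Needs human review:", groups_to_review(rates))
```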

Developers of AI systems and products should:

1. Learn to identify sources of bias using resources like the ones discussed above.
2. Search for sources of bias in your systems as part of your development lifecycle.
3. Make the outputs of your AI systems explainable and interpretable so that users can trace how a given output came about.
4. Build into your AI system the ability for human users to supervise and override AI outputs when appropriate.

Note that item 2 in the first list and item 3 in the second list above both reference a subfield of AI called Explainable AI (XAI), which is still in active development and often difficult to implement. For in-depth information on XAI, I recommend reviewing the paper Explainable AI (XAI): A Systematic Meta-Survey of Current Challenges and Future Opportunities (November 2021) as well as the book Interpretable Machine Learning (December 2022). Regardless of how difficult it might be to implement, explainability and interpretability (sometimes called “understandability”) are highly desirable traits for trustworthy AI applications.

ForgeRock’s AI-Enabled Products

At ForgeRock, we currently offer two AI-enabled products:

- ForgeRock Autonomous Identity, which uses AI/ML to understand who or what should have access with enough confidence to automatically approve, provision, and certify.
- ForgeRock Autonomous Access, which uses AI/ML to continuously inspect and adapt real-time access based on behavior; it also orchestrates real-time response and remediation.

Both of these products are based on an ML foundation that holds explainability as a core design principle. This helps our customers understand the 'why' behind any particular output or decision produced by these products. That said, it is still possible for bias to creep into the ML models. We work diligently to detect and mitigate any model design issues in these products and encourage our customers to do their own evaluations, ask us questions, and work with us as needed to reduce the chances of these products causing harm to individuals or classes of individuals.

References Used in This Article

- NIST SP 1270, Towards a Standard for Identifying and Managing Bias in Artificial Intelligence (March 2022)
- NISTIR 8312, Four Principles of Explainable Artificial Intelligence (September 2021)
- AI Risk Management Framework, v1.0 (January 26, 2023)
- AI Risk Management Framework Companion Playbook, public review draft (January 26, 2023)
- Introduction to the NIST AI RMF 1.0: An Explainer Video (January 25, 2023)
- Explainable AI (XAI): A Systematic Meta-Survey of Current Challenges and Future Opportunities, preprint paper (November 2021)
- Interpretable Machine Learning, book (December 2022)

Additional Resources

- Mitigating AI Bias, draft NIST project description (August 18, 2022)
- Mitigation of AI/ML Bias in Context, project homepage
- Treatment of unwanted bias in classification and regression machine learning tasks, ISO/IEC AWI TS 12791 (in work)