ForgeRock Identity Platform: Docker Deployment From the Ground Up

Author: Patrick Diligent

Created at: Oct 2021

Updated at: Jul 2024

Introduction

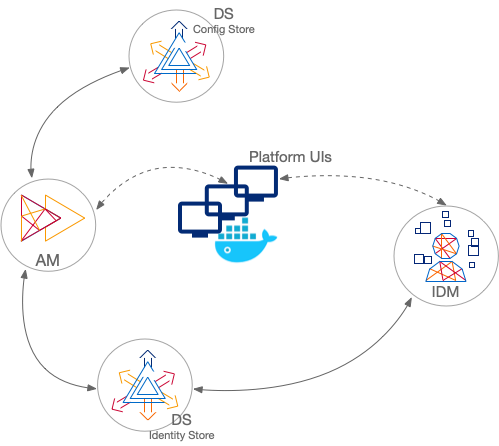

The ForgeRock documentation provides guidance on deploying the ForgeRock Identity Platform (Platform Setup Guide). The ForgeOps project demonstrates a platform deployment on Kubernetes, for the main cloud providers. The repository is hosted at https://github.com/ForgeRock/forgeops, and the project is documented at DevOps 7.1. The project provides the tooling for deploying the ForgeRock Platform in a simple way. However, especially for novices in the DevOps field, going beyond the tool in order to adapt it to real-life requirements may not be that easy.

This article’s purpose is to look under the hood, as understanding the inner workings is essential to validate whether it fits the organization’s cloud deployment, and to determine the steps to achieve the goal. The focus of this paper is to demonstrate a platform deployment with Docker—outside of the Kubernetes framework—using ForgeRock’s base Docker images. We are also going to show how to build it up, step-by-step, and explain the required artifacts on the way.

To follow along, you can clone the platform-compose samples repository [https://stash.forgerock.org/projects/PROSERV/repos/platform-compose]:

✗ git clone ssh://git@stash.forgerock.org:7999/proserv/platform-compose.git

It will also be useful to check out the forgeops repository:

✗ git clone https://github.com/ForgeRock/forgeops

✗ cd forgeops

✗ git checkout release/7.1.0

Note that the Bitbucket project is not a replacement for ForgeOps; it can help ease the ForgeOps learning curve, and is a sample that provides support to this article. It is not production-ready.

Running the base Docker images

The prerequisites

The AM and DS base images need to be provided with a pre-built keystore and truststore in order to operate. The IDM base image is an exception, as by default, it generates the security stores. The generation of secrets and security stores is covered by the ForgeOps Secret Agent Operator, but since we can’t deploy it outside of Kubernetes, let’s have them generated by a basic product install. Since this is already covered by the documentation, let’s assume that you have already installed IDM, AM, and DS 7.1.0, locally. Collecting the security stores and keys from the different products, let’s assume that you have created this tree:

security
├── am
│   ├── am
│   │   ├── keystore.jceks
│   │   └── truststore
│   └── amster
│       ├── amster_rsa
│       ├── amster_rsa.pub
│       └── authorized_keys
├── ds
│   ├── keystore
│   └── keystore.pin
└── idm
    ├── keystore.jceks
    ├── keystorepass
    ├── storepass
    └── truststore

The security files for DS are located under opendj/config, for IDM they are under openidm/security, and for AM, they are found under the selected configuration folder in the security/keystores and security/keys/amster folders.
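Before going further, it can help to confirm that the collected files are all in place. The following is a minimal sketch, assuming the layout of the tree above; the function name `check_security_tree` is hypothetical and the file list should be adjusted if your tree differs.

```shell
#!/bin/sh
# Sketch: report which of the expected security files are missing.
# The file list mirrors the tree described in this article (an assumption
# about your layout, not something the products enforce).
check_security_tree() {
    root="$1"
    missing=0
    for f in \
        am/am/keystore.jceks \
        am/am/truststore \
        am/amster/amster_rsa \
        am/amster/amster_rsa.pub \
        am/amster/authorized_keys \
        ds/keystore \
        ds/keystore.pin \
        idm/keystore.jceks \
        idm/keystorepass \
        idm/storepass \
        idm/truststore
    do
        if [ ! -f "$root/$f" ]; then
            echo "missing: $f"
            missing=1
        fi
    done
    # Non-zero status when at least one file is absent
    return "$missing"
}
```

For example, `check_security_tree /path/to/security && echo "security tree looks complete"` prints the confirmation only when every file is found.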

Create an environment variable for the location of the security folder root:

✗ export SECROOT_PATH=/path/to/security

Launching the DS base image

When launching the base DS image with the command below, the container displays the usage, with the key environment variables:

✗ docker run --rm -it --name ds gcr.io/forgerock-io/ds:7.1.0

So let’s start the image with the recommended command, mounting the secrets volume on to the security folder you have previously prepared:

✗ docker run --rm -it --env DS_SET_UID_ADMIN_AND_MONITOR_PASSWORDS=true --env DS_UID_ADMIN_PASSWORD=password --env DS_UID_MONITOR_PASSWORD=password --name ds --mount type=bind,src=$SECROOT_PATH/ds,dst=/opt/opendj/secrets gcr.io/forgerock-io/ds:7.1.0 start-ds

Once the initialization is complete, let’s have a peek inside:

✗ docker exec -it ds bash

forgerock@9327ce88ae18:/opt/opendj$ ldapsearch -Duid=admin -w password -X -b "" '&'

dn: uid=admin

objectClass: top

objectClass: person

...

dn: uid=monitor

...

As you can see, this is a blank server with no profile applied, so we can’t use this image as a CTS or Userstore yet. We will come back to this later on.

Launching the IDM base image

It is as simple as:

✗ docker run --rm -it -p 8080:8080 gcr.io/forgerock-io/idm:7.1.0

You can then verify that the instance is functional by pointing the browser to http://localhost:8080/admin, using the default credentials. This instance is configured with an embedded DS repository (not recommended in production), and with generated security stores. The next step is to customise it to feed pre-generated security stores, and connect to an external DS repository.

About the Userstore image

When installing AM the usual way, that is, with the Amster install command, or dropping the AM war into the webapp container and installing with the UI, the install creates the required entries to support policy configuration in the external stores. Then, whenever a new realm is created, either via the admin console, or via REST, the proper entries are also created to support the new realm.

This is a different story for File Based Configuration (FBC). First, remember that FBC is not supported outside of its use in the ForgeRock Docker base image, so here we will use the AM image as is. When the AM base image is built (see the am-base Dockerfile), the following happens:

- The AM server is installed with Amster, using an embedded configuration store, with an environment setting that causes the FBC to be generated on the way.
- The setup also applies an additional configuration that is flushed as well to the FBC.
- Once the setup is complete, the AM server is stopped, any trace of the embedded store is removed, and the bootstrap configuration is patched to read the configuration from FBC.
- As a result, the FBC configuration is baked into the base image, ready to be deployed.

Since the install relies entirely on the embedded store (that is, no external Userstore is present at install time), no base entries can be loaded into any external server. The external Userstore therefore needs to be pre-loaded with the base entries. In the ForgeOps release for 7.0, this was done by the ldif-importer container, but it is now baked directly into the idrepo image for 7.1. Furthermore, any subrealm should be accompanied with the proper base entries. Adding a new realm with the admin console will work. However, if the FBC with the new realm is baked into a custom image, starting the server with a blank Userstore (an instance without the required entries) will cause authorization issues later, as AM does not create the necessary entries on the fly.

Creating the Userstore image

Let’s build that image using the configuration from the ForgeOps project:

✗ cd /path/to/forgeops/docker/7.0/ds

✗ docker build --file idrepo/Dockerfile -t local/idrepo:7.1.0 .

Let’s look at the build. In idrepo/Dockerfile, new entries are added to the AM identity-store profile from the idrepo/orgs.ldif file and external-am-datastore.ldif. Inspecting these files, you’ll see that there is also a provision for an alpha and a bravo realm. This is the template that you need to use when providing a configuration for a new realm. In the Dockerfile, the setup.sh script applies the needed profiles, and creates the necessary indexes. In fact, in addition to the AM idrepo profile, the image is also configured as a CTS, an external application/policy store (config profile), and an IDM repo, so you could just deploy this image for all of these roles. Let’s start it:

✗ docker network create net

✗ docker run --rm -it --network net --env DS_SET_UID_ADMIN_AND_MONITOR_PASSWORDS=true --env DS_UID_ADMIN_PASSWORD=password --env DS_UID_MONITOR_PASSWORD=password --mount type=bind,src=$SECROOT_PATH/ds,dst=/opt/opendj/secrets --name idrepo local/idrepo:7.1.0 start-ds

Then, let’s play with it. First, load a few user entries, then perform an ldapsearch:

✗ docker exec -it idrepo /opt/opendj/bin/make-users.sh 10

✗ docker exec -it idrepo ldapsearch -w password -z 1 -b "ou=identities" 'fr-idm-uuid=*' cn givenname mail uid

dn: fr-idm-uuid=18966e8c-461c-4620-aad1-6261063913ef,ou=People,ou=identities

cn: Aaccf Amar

givenname: Aaccf

mail: user.0@example.com

uid: user.0

✗ docker exec -it idrepo ldapsearch -w password -b "" -s base '&' namingcontexts

dn:

namingcontexts: uid=admin

namingcontexts: ou=tokens

namingcontexts: uid=monitor

namingcontexts: dc=openidm,dc=forgerock,dc=io

namingcontexts: ou=am-config

namingcontexts: ou=identities

namingcontexts: uid=proxy

*Note:* Due to the -z 1 command line option, the results also display the following message:

# The LDAP search request failed: 4 (Size Limit Exceeded)

# Additional Information: This search operation has sent the maximum of 1 entries to the client

Creating the CTS image

Build the CTS image with:

✗ cd /path/to/forgeops/docker/7.0/ds

✗ docker build --file cts/Dockerfile -t local/cts:7.1.0 .

And run it:

✗ docker run --rm -it --network net --env DS_SET_UID_ADMIN_AND_MONITOR_PASSWORDS=true --env DS_UID_ADMIN_PASSWORD=password --env DS_UID_MONITOR_PASSWORD=password --mount type=bind,src=$SECROOT_PATH/ds,dst=/opt/opendj/secrets --name cts local/cts:7.1.0 start-ds

We are now ready to launch the AM base image.

Launching the AM base image

This is a crucial point: the AM base image has the bare minimum configuration, with the purpose that customizations add new configurations, but never remove them. The base image at this stage is therefore not fully operational; in particular, you won’t be able to implement OIDC flows as the encryption/signing key mappings are missing.

Inspecting openam/samples/docker/images/am-base/docker-entrypoint.sh (in AM-7.1.0.zip) is very instructive, as it contains all the environment settings. In this exercise, let’s use non-SSL connections as a first step, and provide the details for the cts and idrepo stores, the password, and an encryption key.

✗ docker run --rm -it --network net --env AM_STORES_USER_SERVERS=idrepo:1389 --env AM_STORES_CTS_SERVERS=cts:1389 --env AM_STORES_SSL_ENABLED=false --env AM_PASSWORDS_AMADMIN_CLEAR=password --env AM_ENCRYPTION_KEY=C00lbeans --mount type=bind,src=$SECROOT_PATH/am,dst=/var/run/secrets -p 8081:8080 --name am gcr.io/forgerock-io/am-base:7.1.0

Create this entry in the /etc/hosts file:

127.0.0.1 am

And point the browser to http://am:8081/am (credentials: amadmin/password). This should load the dashboard. Navigate to the identities tab; you should see all the users you have created previously.

At this point, browse the different configuration tabs; you can indeed confirm that the server has minimal configuration.

Evolving to a platform deployment

You can use the platform-compose sample to deploy a fully functional platform (to play with as a sandbox); or, if your laptop has sufficient resources to run Minikube, and you are comfortable with Kubernetes, use ForgeOps to deploy the platform. So, the journey could stop here for you. But if you are curious about establishing this setup from the ground up, then read on. This article focuses solely on a pure Docker deployment. All the steps described further on are materialized in the platform-compose project, so it is a good idea to refer to it while reading through the instructions.

AM image customization

The ForgeRock product base images are the empty canvas on which to lay out the elaborate configuration. It is generally advised to follow the immutable model: configuration is not changed in production but baked into the deployed images, so a configuration change requires a new promotion into production. As with the IDM image, we build a new AM image from the AM base image. What we have to do then is overlay a new configuration in the derived image, and that is exactly what happens in the ForgeOps model.

Docker compose file

First, to preserve our little fingers, let’s automate the deployment by creating a compose file (docker-compose.yaml), a .env file, and a folder structure as shown below. Copy /path/to/forgeops/docker/7.0/ds to the folder that holds the security/ folder, leading to this:

✗ cp -r /path/to/forgeops/docker/7.0/ds . # Copy to the same folder containing docker-compose.yaml

✗ tree -La 1
.
├── .env
├── docker-compose.yaml
├── ds
└── security

Create the docker-compose.yaml file with the following text that automates the command lines we just used to build and launch the containers:

services:
  idrepo:
    build:
      context: ds
      dockerfile: idrepo/Dockerfile
    image: local/idrepo:7.1.0
    container_name: idrepo.local
    environment:
      - DS_SET_UID_ADMIN_AND_MONITOR_PASSWORDS=true
      - DS_UID_ADMIN_PASSWORD=password
      - DS_UID_MONITOR_PASSWORD=password
    ports:
      - 389:1389
    volumes:
      - ./security/ds:/opt/opendj/secrets
    command: start-ds
  cts:
    build:
      context: ds
      dockerfile: cts/Dockerfile
    image: local/cts:7.1.0
    container_name: cts.local
    ports:
      - 1389:1389
    environment:
      - DS_SET_UID_ADMIN_AND_MONITOR_PASSWORDS=true
      - DS_UID_ADMIN_PASSWORD=password
      - DS_UID_MONITOR_PASSWORD=password
    command: start-ds
    volumes:
      - ./security/ds:/opt/opendj/secrets
  am:
    image: gcr.io/forgerock-io/am-base:7.1.0
    container_name: am.local
    environment:
      - AM_STORES_USER_SERVERS=idrepo:1389
      - AM_STORES_CTS_SERVERS=cts:1389
      - AM_STORES_SSL_ENABLED=false
      - AM_PASSWORDS_AMADMIN_CLEAR=password
      - FQDN=${FQDN}
      - AM_ENCRYPTION_KEY=C00lbeans
    ports:
      - 8081:8080
    volumes:
      - ./security/am:/var/run/secrets

Create the .env file:

COMPOSE_PROJECT_NAME=playground

FQDN=openam.example.com

Add this line to /etc/hosts:

127.0.0.1 openam.example.com

Then run:

✗ docker compose build
✗ docker compose up -d

And watch the console output with:

✗ docker compose logs -f

Once the initialization is complete, point the browser to http://openam.example.com:8081/am.
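As an alternative to watching the logs, a script can poll AM until it answers. The following is a minimal sketch of a generic retry helper; the function name `retry` is hypothetical, and the commented example assumes that curl is installed on the host and that the URL above is reachable.

```shell
#!/bin/sh
# Sketch: run a command until it succeeds, up to a maximum number of attempts.
# usage: retry <attempts> <delay-seconds> <command> [args...]
retry() {
    attempts="$1"
    delay="$2"
    shift 2
    n=1
    while ! "$@"; do
        if [ "$n" -ge "$attempts" ]; then
            echo "gave up after $n attempts: $*" >&2
            return 1
        fi
        n=$((n + 1))
        sleep "$delay"
    done
}

# Example (assumption: curl is available on the host):
# retry 30 5 curl -sf -o /dev/null http://openam.example.com:8081/am
```

The helper takes the probe as an argument, so the same function can wait on any other check that returns a non-zero status until the service is ready.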

The AM custom image

Before we can configure the server further, we need to create the base that lets us preserve the configuration after a redeployment. For that, we create a downstream image of the AM base image, with the build folder taken from the ForgeOps project, and clear the config/ folder as a temporary measure:

# Copy to the same folder containing docker-compose.yaml
✗ cp -r /path/to/forgeops/docker/7.0/am .
✗ mkdir -p am/config
✗ rm -rf am/config/* # In case there is content
# (this happens if forgeops has been deployed once...)
✗ touch am/config/.not_empty # this allows git to initialise on a non empty folder

Notice the following points in the Dockerfile:

- The image is based on gcr.io/forgerock-io/am-base:7.1.0.
- The config/ folder is copied over to /home/forgerock/openam/config, where the FBC resides.
- The openam folder is version controlled, which makes it possible to export only the changes to the FBC. The export is accomplished with the export-diff.sh script. The export.sh script exports everything, except the boot.json file, which should never be touched.

In the docker-compose.yaml file, replace the am service spec with this:

  am:
    build: am
    image: local/am:7.1.0
    container_name: am.local
    environment:
      - AM_STORES_USER_SERVERS=idrepo:1389
      - AM_STORES_USER_CONNECTION_MODE=ldap
      - AM_STORES_CTS_SERVERS=cts:1389
      - AM_STORES_SSL_ENABLED=false
      - AM_PASSWORDS_AMADMIN_CLEAR=password
      - FQDN=${FQDN}
      - AM_ENCRYPTION_KEY=C00lbeans
    ports:
      - 8081:8080
    volumes:
      - ./security/am:/var/run/secrets

In order to preserve directory data after redeployment, let’s add a volume to the CTS and IDREPO. Add the idrepo-data line shown below to the idrepo service in the volumes spec:

  idrepo:
    [...]
    volumes:
      - idrepo-data:/opt/opendj/data
      - ./security/ds:/opt/opendj/secrets
    command: start-ds

and the line with cts-data to the volumes spec for the CTS:

  cts:
    [...]
    volumes:
      - cts-data:/opt/opendj/data
      - ./security/ds:/opt/opendj/secrets

Add a volumes section at the end of the file:

volumes:
  idrepo-data:
  cts-data:

Inspecting the FBC

Bring the deployment up (docker compose up -d), then open a terminal into the am container:

✗ docker exec -it am.local bash

✗ cd /home/forgerock/openam

✗ git status

On branch master

Changes not staged for commit:

(use "git add <file>..." to update what will be committed)

(use "git restore <file>..." to discard changes in working directory)

modified: config/boot.json

...

Authenticate to the admin console and create an authentication tree, then run the git status command again. The following text shows an example of the modifications to the FBC after creating the new tree:

Untracked files:

(use "git add <file>..." to include in what will be committed)

config/services/realm/root/authenticationtreesservice/1.0/organizationconfig/default/

config/services/realm/root/corsservice/1.0/globalconfig/default/

config/services/realm/root/datastoredecisionnode/1.0/globalconfig.json

config/services/realm/root/datastoredecisionnode/1.0/instances.json

config/services/realm/root/datastoredecisionnode/1.0/organizationconfig/default.json

config/services/realm/root/datastoredecisionnode/1.0/organizationconfig/default/

config/services/realm/root/datastoredecisionnode/1.0/pluginconfig.json

config/services/realm/root/passwordcollectornode/1.0/globalconfig.json

config/services/realm/root/passwordcollectornode/1.0/instances.json

config/services/realm/root/passwordcollectornode/1.0/organizationconfig/default.json

config/services/realm/root/passwordcollectornode/1.0/organizationconfig/default/

config/services/realm/root/passwordcollectornode/1.0/pluginconfig.json

config/services/realm/root/usernamecollectornode/1.0/globalconfig.json

config/services/realm/root/usernamecollectornode/1.0/instances.json

config/services/realm/root/usernamecollectornode/1.0/organizationconfig/default.json

config/services/realm/root/usernamecollectornode/1.0/organizationconfig/default/

config/services/realm/root/usernamecollectornode/1.0/pluginconfig.json

Exit from the am container bash session:

forgerock@7ca6734fbeba:~$ exit

Next, run the export-diff.sh script and retrieve the exported configuration:

✗ docker exec -it am.local ls /home/forgerock # view the folder contents to verify

# that no .tar file is present

✗ docker exec -it am.local /home/forgerock/export-diff.sh

✗ docker exec -it am.local ls /home/forgerock # Verify the .tar file is created

✗ docker cp am.local:/home/forgerock/updated-config.tar .

✗ cd am; tar xf ../updated-config.tar

Then, bring the deployment down, rebuild the am image, then up again:

✗ cd .. # return to the folder where the .env file is present

✗ docker compose down

✗ docker compose build am

✗ docker compose up -d

Since we have added a persistent volume to the CTS, you should still be authenticated. Log out, then authenticate again, and navigate to the authentication trees page. The tree you’ve just defined should still be there.

Dynamic configuration

So we looked at the FBC, the static configuration. What about dynamic data? If you create an OAuth2 client, the configuration is pushed to the external application store, which resides in the idrepo. Since we have created a persistent volume for the idrepo, the application and policy data survives across redeployments. However, at some stage, this configuration needs to be imported when redeploying onto a blank canvas. Let’s copy the Amster image over from the ForgeOps project:

# Copy to the same folder containing docker-compose.yaml

✗ cp -r /path/to/forgeops/docker/7.0/amster .

✗ mkdir -p config

✗ rm -rf config/* # In case there is content

# (this happens if forgeops has been deployed once…)

Edit the amster/Dockerfile, commenting out the apt-get section as shown, so that it looks like:

FROM gcr.io/forgerock-io/amster:7.1.0

# At the time of this writing, due to a change in the Debian packaging,

# the apt-get install is broken, and so for the purpose of this exercise, the import upload

# feature is not available.

# USER root

# ENV DEBIAN_FRONTEND=noninteractive

# ENV APT_OPTS="--no-install-recommends --yes"

# RUN apt-get update \

# && apt-get install -y openldap-utils jq inotify-tools \

# && apt-get clean \

# && rm -r /var/lib/apt/lists /var/cache/apt/archives

USER forgerock

ENV SERVER_URI /am

COPY --chown=forgerock:root . /opt/amster

ENTRYPOINT [ "/opt/amster/docker-entrypoint.sh" ]

Amster is instructed to export only dynamic configuration, with the following parameter seen in amster/export.sh:

realmEntities="OAuth2Clients IdentityGatewayAgents J2eeAgents WebAgents SoapStsAgents Policies CircleOfTrust Saml2Entity Applications"

Edit amster/export.sh and amster/import.sh, replacing all occurrences of http://am:80/am with http://am:8080/am. For example, you could use the following commands:

✗ sed -i -e "s/:80/:8080/g" amster/export.sh

✗ sed -i -e "s/:80/:8080/g" amster/import.sh

In the Kubernetes context, the am pod is fronted by a service mapping port 80 to 8080. Outside of Kubernetes, the port is the Docker container port, which is 8080.
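One caveat with the short `:80` pattern is that it also matches the start of `:8080`, so applying it a second time would turn `:8080` into `:80800`. The following is a minimal sketch of a stricter substitution that matches the full AM URL only; the function name `fix_am_port` is hypothetical.

```shell
#!/bin/sh
# Sketch: rewrite only the exact AM URL, reading stdin and writing stdout.
# Uses '#' as the sed delimiter so the slashes in the URL need no escaping.
fix_am_port() {
    sed -e 's#http://am:80/am#http://am:8080/am#g'
}

# Applying the filter twice leaves the result unchanged.
printf 'http://am:80/am\n' | fix_am_port | fix_am_port
# prints http://am:8080/am
```

The same expression can be passed to `sed -i -e` on amster/export.sh and amster/import.sh, as in the commands above.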

Add the following amster service spec to the docker-compose.yaml before the volumes at the end:

  amster:
    image: local/amster:7.1.0
    build: amster
    container_name: amster.local
    volumes:
      - ./security/amster:/var/run/secrets
    command: export

Then build the image:

✗ docker compose build amster

Deploy amster:

✗ docker compose up -d amster

Create an OAuth2 client via the admin console, then run:

✗ docker exec -it amster.local /opt/amster/export.sh

✗ mkdir amster/config

✗ docker cp amster.local:/var/tmp/amster/realms/root/ amster/config

Browse into amster/config/root/OAuth2Clients; a JSON file should be present for the client you just created.

To import:

✗ docker cp amster/config amster.local:/opt/amster/config

✗ docker exec -it amster.local /opt/amster/import.sh

This process is automated in the platform-compose samples, following the same model as ForgeOps, but using simpler scripts to help understand the underlying principles.

Platform configuration

At this stage, the AM instance is not complete, and not fully operational. For example, if you create an OAuth2 Provider, an OIDC client, and initiate an Authorization Grant flow, then the access_token endpoint fails with the following message:

"error_description": "org.forgerock.secrets.NoSuchSecretException: No secret configured for purpose oauth2.oidc.idtoken.signing",

The AM base image provides only the bare minimum, so that derived images can always add configuration to the FBC, and never have to remove anything from it. The ForgeOps project fortunately provides initial configuration to bridge this gap. At this stage, you have a choice:

1. Take the ForgeOps configuration as is; it has all the necessary artifacts to configure a platform deployment with a shared idrepo between IDM and AM.
2. Or, your deployment diverges from the ForgeOps architecture, so you would rather pick the bare minimum that fits your architecture.

With option 1, it is just a matter of copying the whole configuration over:

✗ rm -rf am/config

✗ cp -r /path/to/forgeops/config/7.0/cdk/am/config am

✗ docker compose up -d

If you opt for option 2, then this is the bare minimum to get this OIDC flow working:

✗ tree -L 4 am/config
am/config
└── services
    └── realm
        └── root
            ├── filesystemsecretstore
            ├── keystoresecretstore
            ├── oauth2provider
            └── scriptingservice

Then, proceed to the Platform Setup guide (https://backstage.forgerock.com/docs/platform/7.1/platform-setup-guide/#deployment2) to configure AM for the platform, or add your own specific configuration.

At this stage, you should also inspect all of the FBC configuration provided by ForgeOps to determine what is needed to get your deployment working.

Upgrading the configuration

After baking all of the ForgeOps configuration (using option 1 discussed previously), if you inspect it via the admin console, you will find that most of it is configured with the proper FQDN value, thanks to the placeholders in the configuration. For example, in the validation service JSON file, the pattern is defined as:

"validGotoDestinations" : [

"&{am.server.protocol|https}://&{fqdn}/*?*"

]

The issue arises when exporting the configuration: the placeholders are lost, and the expanded values are exported instead (this differs from IDM’s behavior, as one can directly enter placeholders in the admin UI). In order to reinstate the placeholders, the config upgrader tool, implemented by a Docker container, is used: gcr.io/forgerock-io/am-config-upgrader:7.1.0.

The tool needs to be provided with rules, and in the ForgeOps project, this is provided with the file: config/am-upgrader-rules/placeholders.groovy. The container expects two mounted volumes, one for the configuration to upgrade, and one for the rules.

The rules provided in ForgeOps are the minimum to ensure the portability of the configuration, and address environment values used in the AM base image; for example, this rule for the sunIdentityRepositoryService:

forNamedInstanceSettings("dsameuser", replace("userPassword").with("&{am.passwords.dsameuser.hashed.encrypted}")))),

ensures that the configuration is in line with the following setting in the AM base image (see openam/samples/docker/images/am-base/docker-entrypoint.sh in the expanded AM product archive):

export AM_PASSWORDS_DSAMEUSER_HASHED_ENCRYPTED=$(echo $AM_PASSWORDS_DSAMEUSER_CLEAR | am-crypto hash encrypt des)

The command issued by the config upgrader is (in the am-config-upgrader script):

/home/forgerock/amupgrade/amupgrade --inputConfig /am-config/config/services --output /am-config/config/services --fileBasedMode --prettyArrays --clean false --baseDn ou=am-config \$(ls /rules/*)"

There is currently a single file in the am-upgrader-rules/ folder (mounted on /rules in the container); to add your own customizations, provide a separate rules file in addition to the default one. Note that these rules are in fact implemented as Groovy scripts.

So, let’s say that you have exported the configuration, copied and extracted the resulting tar archive in the host file system, and copied the rules locally in the project folder as well, resulting in the layout below. Make sure to copy the services/ tree (expanded from the exported archive, updated-config.tar) into upgrader/config/; these are the files that the tool is going to process:

upgrader
└── config
    └── services/realms/...
am-upgrader-rules
└── placeholders.groovy

Run the following command:

✗ docker run --user :$UID --volume /path/to/upgrader:/am-config --volume /path/to/am-upgrader-rules:/rules gcr.io/forgerock-io/am-config-upgrader:7.1.0 sh -c "/home/forgerock/amupgrade/amupgrade --inputConfig /am-config/config/services --output /am-config/config/services --fileBasedMode --prettyArrays --clean false --baseDn ou=am-config \$(ls /rules/*)"

Reading existing configuration from files in /am-config/config/services...

Modifying configuration based on rules in [/rules/placeholders.groovy]...

reading configuration from file-based config files

Upgrade Completed, modified configuration saved to /am-config/config/services

In platform-compose, this functionality is provided by the script compose/sandbox/bin/upgrade-config.sh, which is called by save-config.sh. The project also provides two other scripts: export-config.sh and init-config.sh. export-config.sh stashes the exported data in an intermediary area, to give some opportunity for inspecting it. save-config.sh copies the exported configuration to the versioned configuration, upgrading the placeholders on the fly (upgrade-config.sh). At this stage, the changes are ready to be committed. Then, init-config.sh copies this configuration to the Docker config folders, ready for building the new images and re-deploying.
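After an upgrader run, a quick way to see whether any expanded value survived is to search the processed files for the literal host name. The following is a minimal sketch; the function name `find_hardcoded_fqdn` is hypothetical, and it assumes that the FQDN value from the .env file is the string that should have been replaced by a placeholder.

```shell
#!/bin/sh
# Sketch: list configuration files that still contain a literal host name.
# usage: find_hardcoded_fqdn <config-dir> <fqdn>
# -r: recurse into the folder, -l: print file names only,
# -F: treat the host name as a fixed string (dots are not wildcards).
find_hardcoded_fqdn() {
    grep -rlF -- "$2" "$1"
}

# Example (paths as used in this article):
# find_hardcoded_fqdn upgrader/config openam.example.com
```

Any file listed is a candidate for an additional rule in a custom rules file; an empty result (grep exits with status 1) means no literal occurrence was found.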

IDM image customization

Configuring IDM for a platform setup is a bit tricky, as in this setup, IDM only supports bearer token authorization, so the IDM admin console can’t be used anymore. To simplify the process, we first configure IDM with the standard authentication scheme in order to get access to the embedded admin UI, focusing on getting the basic configuration right, mainly the DS repository (which should use an explicit mapping for users), and the boot properties. Having access to the admin UI will help you refine the configuration.

Let’s reuse the ForgeOps IDM image:

# Copy to the same folder containing docker-compose.yaml

✗ cp -r /path/to/forgeops/docker/7.0/idm .

✗ mkdir -p idm/conf

✗ rm -rf idm/conf/* # In case there is content

# (this happens if forgeops has been deployed once...)

Let’s look at the Dockerfile; it all happens with this line:

COPY --chown=forgerock:root . /opt/openidm

This copies over the conf/ and resolver/ folders into the image. So, after launching the image, you should be able to log in to the admin console and make some changes. To preserve the changes after redeployment, typically, all that is needed is to copy the conf/ folder in the container back to the host file system. In this process, there is one rule of thumb: always provide password values with a configuration placeholder to allow the transport of the configuration from one environment to the other.

If we copy over the ForgeOps configuration as is, you will not be able to log in to the IDM admin console, as this configures bearer authentication only; the proper way is to use the login UI, but we are not there yet. So as a starter, let’s do this:

- Copy the default configuration from the IDM 7.1 zip package into idm/conf.
- Get the repository configuration from the Platform Setup guide: repo.ds.json.
- Edit repo.ds.json, so that the connection to the DS idrepo is non-secure.

Note that “embedded” and “security” are not strictly required; however, providing them brings some clarity about the effective setup:

"embedded": false,
"maxConnectionAttempts" : 5,
"security": {
    "keyManager": "none",
    "trustManager": "jvm"
},
"ldapConnectionFactories": {
    "bind": {
        "connectionSecurity": "none",
        "connectionPoolSize": 50,

Also verify a few configuration settings in the file:

- The dnTemplate property in all the mappings should target the correct branch in the directory server. The idrepo suffix ends with dc=io (dc=openidm,dc=forgerock,dc=io in the naming contexts listed earlier), whereas the explicit mapping copied from the IDM zip package ends with dc=com, for example, so this should be changed.
- Verify all the placeholders. The compose file below provides environment variables, which should be in line with what is configured in repo.ds.json. Be aware that resolver/boot.properties also provides values for these variables, and that if any is missing in the shell environment (for example, the environment provided by the Docker compose file), then the value is taken from the properties file (and could be the wrong value).
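To catch a variable that silently falls back to resolver/boot.properties, the container environment can be compared against the list of names you expect to be set. The following is a minimal sketch; the function name `missing_vars` is hypothetical, and the commented example assumes the idm.local container name and the OPENIDM_* variable names used in this article.

```shell
#!/bin/sh
# Sketch: read `env` output on stdin and print every expected name
# that has no NAME=value line in it.
# usage: <env output> | missing_vars NAME [NAME...]
missing_vars() {
    env_dump=$(cat)
    for name in "$@"; do
        if ! printf '%s\n' "$env_dump" | grep -q "^${name}="; then
            echo "$name"
        fi
    done
}

# Example against a running container (assumption: it is named idm.local):
# docker exec idm.local env | missing_vars OPENIDM_REPO_HOST OPENIDM_REPO_PORT OPENIDM_REPO_USER OPENIDM_REPO_PASSWORD
```

Each name printed is one for which IDM would take the value from the properties file instead of the compose file.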

Let’s add a service spec for IDM in the compose file:

  idm:
    build: idm
    image: local/idm:7.1.0
    container_name: idm.local
    volumes:
      - ./security/idm:/var/run/secrets/idm
    ports:
      - 8080:8080
    environment:
      - OPENIDM_REPO_HOST=idrepo
      - OPENIDM_REPO_PORT=1389
      - OPENIDM_REPO_PASSWORD=password
      - OPENIDM_REPO_USER=uid=admin
      - OPENIDM_SECONDARY_REPO_HOST=idrepo
      - OPENIDM_KEYSTORE_LOCATION=/var/run/secrets/idm/keystore.jceks
      - OPENIDM_TRUSTSTORE_LOCATION=/var/run/secrets/idm/truststore
      - OPENIDM_ADMIN_PASSWORD=openidm-admin

Then:

✗ docker compose build idm
✗ docker compose up -d

Access the IDM admin console at http://localhost:8080/admin, enter the usual credentials (openidm-admin/openidm-admin), then navigate to MANAGE → USER; all the users loaded with the make-users.sh script are listed.

Bringing the platform UIs in

At this point, we have established a base from which you can further configure IDM and AM to deploy the Platform using a shared repository. This is done by following the instructions at: https://backstage.forgerock.com/docs/platform/7.1/platform-setup-guide/#deployment2, making sure to secure the configuration at each step by exporting it, saving it, and baking it into new Docker images.

The platform setup is a delicate process—one single miss, and the whole scaffold falls down, so proceed carefully, step-by-step, understanding the underlying dependencies in the configuration. Also, this article should save you time: Understanding and Troubleshooting ForgeRock Platform Integration.

Note that the placeholders.groovy script will undo some of the configuration, unless you remove some of the rules; in particular, the validation service:

// forRealmService("validationService",
//     forRealmDefaults(where(isAnything(),
//         replace("validGotoDestinations")
//             .with(["&{am.server.protocol|https}://&{fqdn}/*?*"]))),
//     forSettings(
//         replace("validGotoDestinations")
//             .with(["&{am.server.protocol|https}://&{fqdn}/*?*"])
//     )),

This is because ForgeOps deploys an ingress controller to front the services, and therefore, there is a single base URI for all the endpoints. Here, as we are following the Platform Setup guide, the services are on different URIs.

Once the setup is complete, you can then launch the platform UIs, as explained at https://backstage.forgerock.com/docs/platform/7.1/platform-setup-guide/#platform-ui.

To integrate the launch of the platform UIs in the compose configuration, add this at the top of the file, before the services block:

x-common-variables-properties: &platform-properties
  - AM_URL=http://openam.example.com:8081/am
  - AM_ADMIN_URL=http://openam.example.com:8081/am/ui-admin
  - IDM_REST_URL=http://openidm.example.com:8080/openidm
  - IDM_ADMIN_URL=http://openidm.example.com:8080/admin
  - IDM_UPLOAD_URL=http://openidm.example.com:8080/upload
  - IDM_EXPORT_URL=http://openidm.example.com:8080/export
  - ENDUSER_UI_URL=http://enduser.example.com:8888
  - PLATFORM_ADMIN_URL=http://admin.example.com:8082
  - ENDUSER_CLIENT_ID=end-user-ui
  - ADMIN_CLIENT_ID=idm-admin-ui
  - THEME=default
  - PLATFORM_UI_LOCALE=en

Then, add the services specs:

  login-ui:
    image: gcr.io/forgerock-io/platform-login-ui:7.1.0
    environment: *platform-properties
    container_name: loginui.local
    ports:
      - 8083:8080
  admin-ui:
    image: gcr.io/forgerock-io/platform-admin-ui:7.1.0
    environment: *platform-properties
    container_name: adminui.local
    ports:
      - 8082:8080
  enduser-ui:
    image: gcr.io/forgerock-io/platform-enduser-ui:7.1.0
    environment: *platform-properties
    container_name: enduserui.local
    ports:
      - 8888:8080

About the platform-compose samples

As mentioned earlier, the samples are available at https://stash.forgerock.org/projects/PROSERV/repos/platform-compose. The project was mainly built using the steps described in this paper, keeping ForgeOps as a reference, and differs from ForgeOps in the following ways:

-

It is not for production deployments, and is based on Docker only; ForgeOps provides mature tools to deploy on Kubernetes and the main cloud providers.

-

The services are fronted by an nginx reverse proxy, where the rules mimic the ingress controller rules configured in ForgeOps. Thus, all platform UIs and admin consoles are accessed under the same domain (by default platform.example.com).

-

The project differs from ForgeOps in that it does not use secure connections to the DS stores. To establish SSL connections, renewing the deployment key is required, and the proper server certificates should be generated, with the correct hostnames, and imported into the AM and IDM truststores accordingly. In ForgeOps, this task is performed by the Secret Agent operator.

-

Everything uses ‘password’ as the default password. In ForgeOps, the DS account passwords are updated using the ldif-importer container.

-

Finally, platform-compose uses simplistic scripts to demonstrate the configuration versioning.
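To picture how a single domain can front several services, as the reverse proxy in the samples does, here is a small Python sketch of path-prefix routing where the longest matching prefix wins. The prefix table is hypothetical and only loosely inspired by the services used in this article; it is not copied from the platform-compose nginx configuration or from the ForgeOps ingress rules, so check the actual rules in those projects.

```python
# Hypothetical prefix-to-service table, for illustration only.
ROUTES = {
    "/am": "am",
    "/am/XUI": "login-ui",
    "/openidm": "idm",
    "/admin": "idm",
    "/platform": "admin-ui",
    "/enduser": "enduser-ui",
}


def route(path, routes=ROUTES):
    """Return the service for the longest prefix matching the path, or None.

    A prefix matches only on whole path segments in this sketch, so
    '/am' matches '/am/json' but not '/amx'.
    """
    matches = [prefix for prefix in routes
               if path == prefix or path.startswith(prefix + "/")]
    if not matches:
        return None
    return routes[max(matches, key=len)]


if __name__ == "__main__":
    for sample in ("/am/XUI/", "/am/json/serverinfo", "/openidm/info/ping", "/other"):
        print(sample, "->", route(sample))
```

Because the more specific prefix takes precedence, a request under /am/XUI reaches the login UI while the rest of /am still reaches AM, which is what allows all the UIs and consoles to share one hostname.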

Conclusion

Hopefully, this (long) demonstration will help you get started with ForgeRock Platform deployments in the cloud. Remember that the project (platform-compose) is not aimed at production, and that it is not a replacement for ForgeOps; rather, it is a sandbox to play with, to discover the features of the ForgeRock base images, and to gain an initial understanding of ForgeOps.
